Data Infrastructure as a Strategic Capital Asset in the AI Era

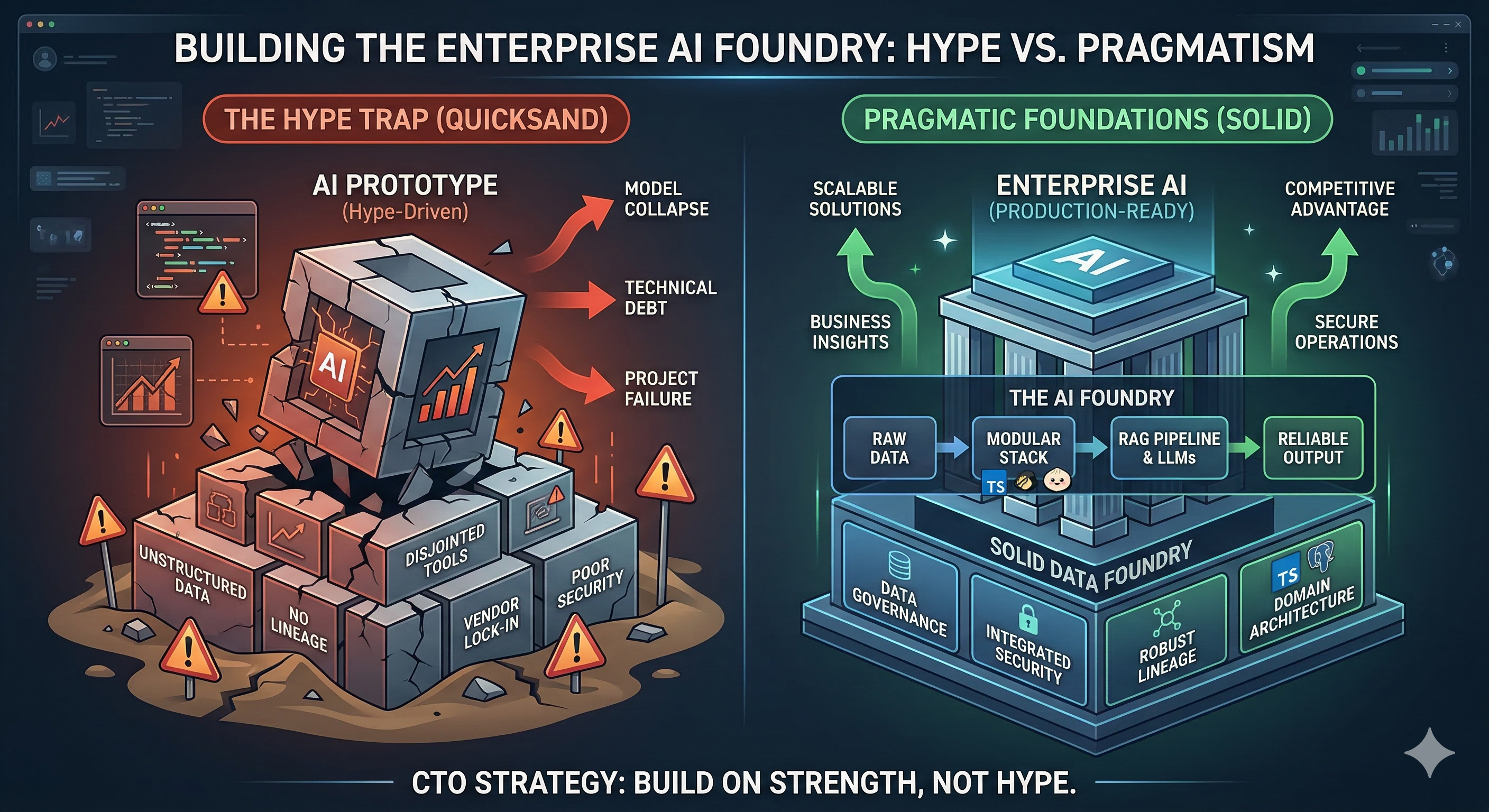

In the current investment landscape, the "AI-ready" label has become a commodity, often masking significant technical debt. For technology-enabled enterprises and B2B SaaS companies, a critical divide has emerged between organizations with fragmented "data silos" and those with a Strategic Data Moat. For CEOs and investment committees, the data platform is no longer a cost center to be managed; it is the primary engine for margin expansion, operational leverage, and valuation defensibility.

As the industry shifts from experimental AI to production-grade intelligence, the winner is determined by the underlying architecture. The most resilient organizations are moving away from monolithic, proprietary solutions toward an unbundled architectural philosophy. This isn't merely a technical preference; it is a strategic maneuver to ensure agility, auditability, and long-term ownership of the company's most valuable intellectual property.

I. The "Unbundled" Paradigm: Engineering for Multiples

The foundational shift in modern data strategy is the move toward what is termed the "unbundled database." As documented in the seminal work Designing Data-Intensive Applications (Kleppmann, 2017), a modern data platform should be viewed as a distributed database where the traditional components—storage, processing, and indexing—are separated into specialized, interoperable subsystems.

From an investment perspective, this unbundled approach is a direct hedge against Vendor Lock-in Risk. By building on open standards, such as Apache Iceberg for table formats and Apache Kafka for the event backbone, an organization keeps its core data assets portable. Because storage and processing are decoupled, the company's "architectural moat" remains a company-owned asset that can be moved across cloud providers or integrated during M&A activity without catastrophic re-platforming costs.

II. The Immutability Mandate: Governance as Risk Mitigation

High-integrity AI requires that inputs are never modified. In this framework, the platform adopts an "append-only" architecture where every change is recorded as a new event, and historical data is never overwritten. This Immutability Mandate provides two critical strategic advantages for the board:

- Auditability & Regulatory Compliance: In increasingly regulated markets, the ability to "time-travel"—reconstructing the exact state of the business at any historical point—is shifting from a technical perk to a legal requirement. If an AI model makes a loan decision or a pricing adjustment, the organization must be able to prove exactly what data was used at that millisecond.

- Predictive Integrity: Immutable, timestamped logs guard against "data leakage," a common failure where AI models are accidentally trained on information that wouldn't have been available at the time of prediction. They also reduce "training-serving skew," where the data a model sees in production differs from the data it was trained on. By enforcing immutable logs, companies keep their AI performance metrics grounded in reality, not architectural artifacts.
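
The append-only and "time-travel" ideas above can be illustrated with a minimal Python sketch. It is not a production design: the `Event` and `AppendOnlyLog` names, the in-memory list, and the sample credit-score values are all hypothetical, chosen only to show how replaying events up to a timestamp reconstructs what a model could have known at decision time.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Any


@dataclass(frozen=True)
class Event:
    """One immutable fact: a field of an entity took a value at a point in time."""
    entity_id: str
    field: str
    value: Any
    recorded_at: datetime


class AppendOnlyLog:
    """Hypothetical in-memory log; events are appended, never updated or deleted."""

    def __init__(self) -> None:
        self._events: list[Event] = []

    def append(self, event: Event) -> None:
        self._events.append(event)

    def state_as_of(self, entity_id: str, as_of: datetime) -> dict[str, Any]:
        """Replay events up to `as_of` (inclusive) to rebuild the entity's state."""
        state: dict[str, Any] = {}
        for event in sorted(self._events, key=lambda e: e.recorded_at):
            if event.entity_id == entity_id and event.recorded_at <= as_of:
                state[event.field] = event.value
        return state


log = AppendOnlyLog()
log.append(Event("cust-1", "credit_score", 640, datetime(2025, 1, 10)))
log.append(Event("cust-1", "credit_score", 710, datetime(2025, 3, 2)))

# State the model would have seen for a decision made on 1 Feb 2025.
at_decision = log.state_as_of("cust-1", datetime(2025, 2, 1))
# State after the later update; the earlier event is still in the log.
latest = log.state_as_of("cust-1", datetime(2025, 12, 31))
```

Because the March update is a new event rather than an overwrite, the February view still reports the score of 640. The same as-of lookup is what keeps later information out of a training set.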

III. The Semantic Ontology: The API for the Enterprise

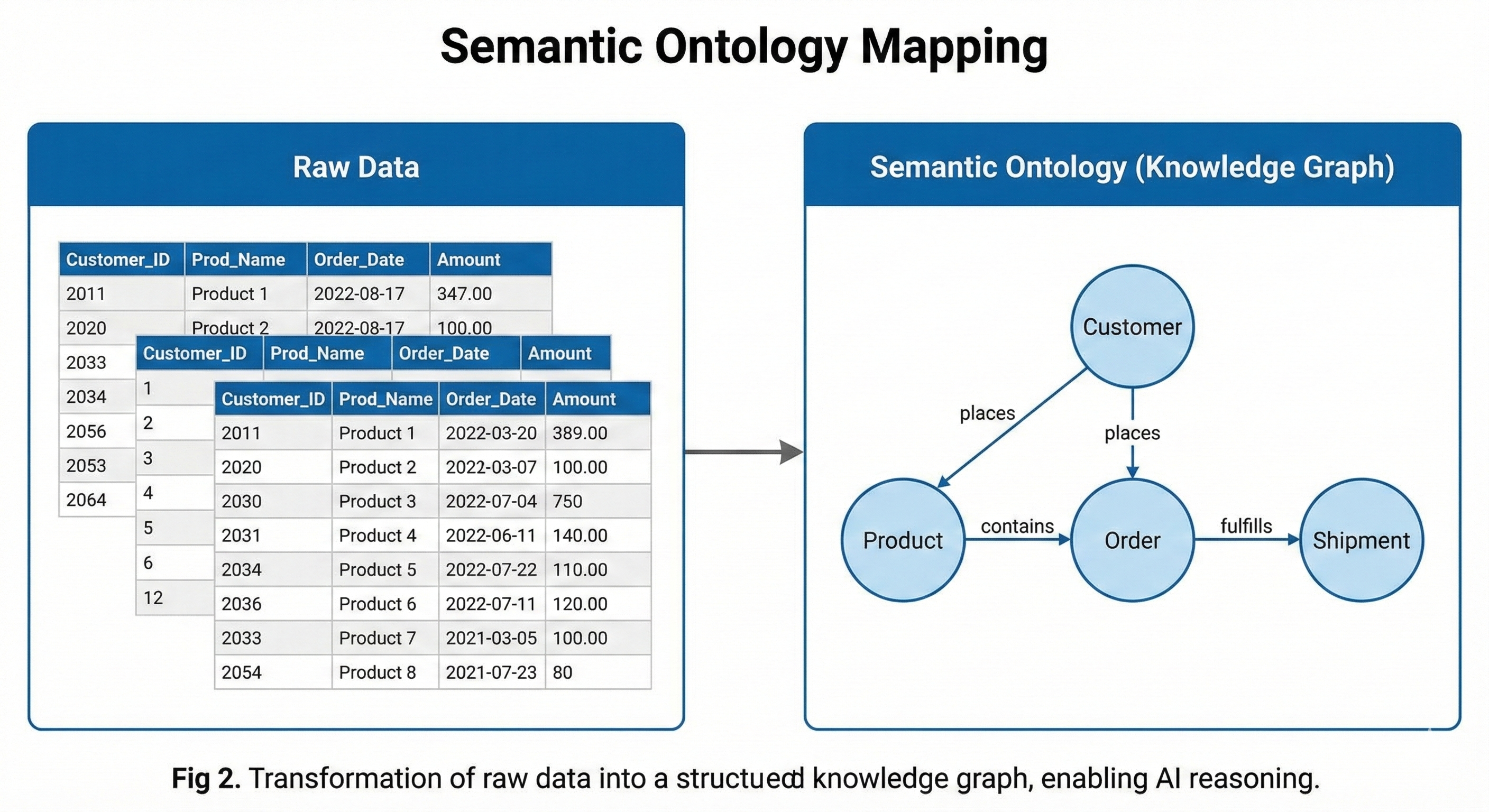

One of the greatest barriers to AI ROI is the "Context Gap." Raw data stored in flat tables is often incomprehensible to Large Language Models (LLMs) or reasoning agents without massive amounts of manual prompt engineering.

To bridge this gap, leading firms are adopting a Semantic Ontology, a concept popularized in enterprise data platforms by the Ontology layer of Palantir Foundry (2024). Instead of looking at "Table_A" and "Table_B," the AI interacts with a graph of typed entities: Customers, Products, Transactions, and Logistics.

This ontology acts as a digital twin of the business logic. For a CEO, this is the "Strategic Interface." It allows for a "Plug-and-Play" AI strategy where new models can be swapped in or out because they are all mapped to the same stable, high-fidelity vocabulary of the business. This structure is what allows a company to move from simple automation to complex, cross-functional reasoning.
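
As a rough illustration of "typed entities" replacing anonymous tables, the Python sketch below models three of the entities named above as dataclasses. The field names and the `describe` helper are assumptions made for this example; they are not taken from Palantir Foundry or any other product.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Customer:
    customer_id: str
    name: str


@dataclass(frozen=True)
class Product:
    product_id: str
    title: str


@dataclass(frozen=True)
class Transaction:
    # A transaction links typed entities instead of carrying opaque foreign keys.
    transaction_id: str
    customer: Customer
    product: Product
    amount_usd: float


def describe(entity_type: type) -> str:
    """Render an entity type as a one-line description that a prompt could reuse."""
    parts = [
        f"{f.name}: {getattr(f.type, '__name__', f.type)}"
        for f in fields(entity_type)
    ]
    return f"{entity_type.__name__}({', '.join(parts)})"


alice = Customer("c-1", "Alice")
widget = Product("p-9", "Widget")
txn = Transaction("t-100", alice, widget, 49.0)
summary = describe(Transaction)
```

Here `summary` is a compact, machine-readable statement of what a Transaction is and how it relates to Customers and Products. Any model mapped to this vocabulary sees the same description, which is the stable interface the "Plug-and-Play" strategy relies on.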

IV. Scaling Value: Data as a Product (DaaP)

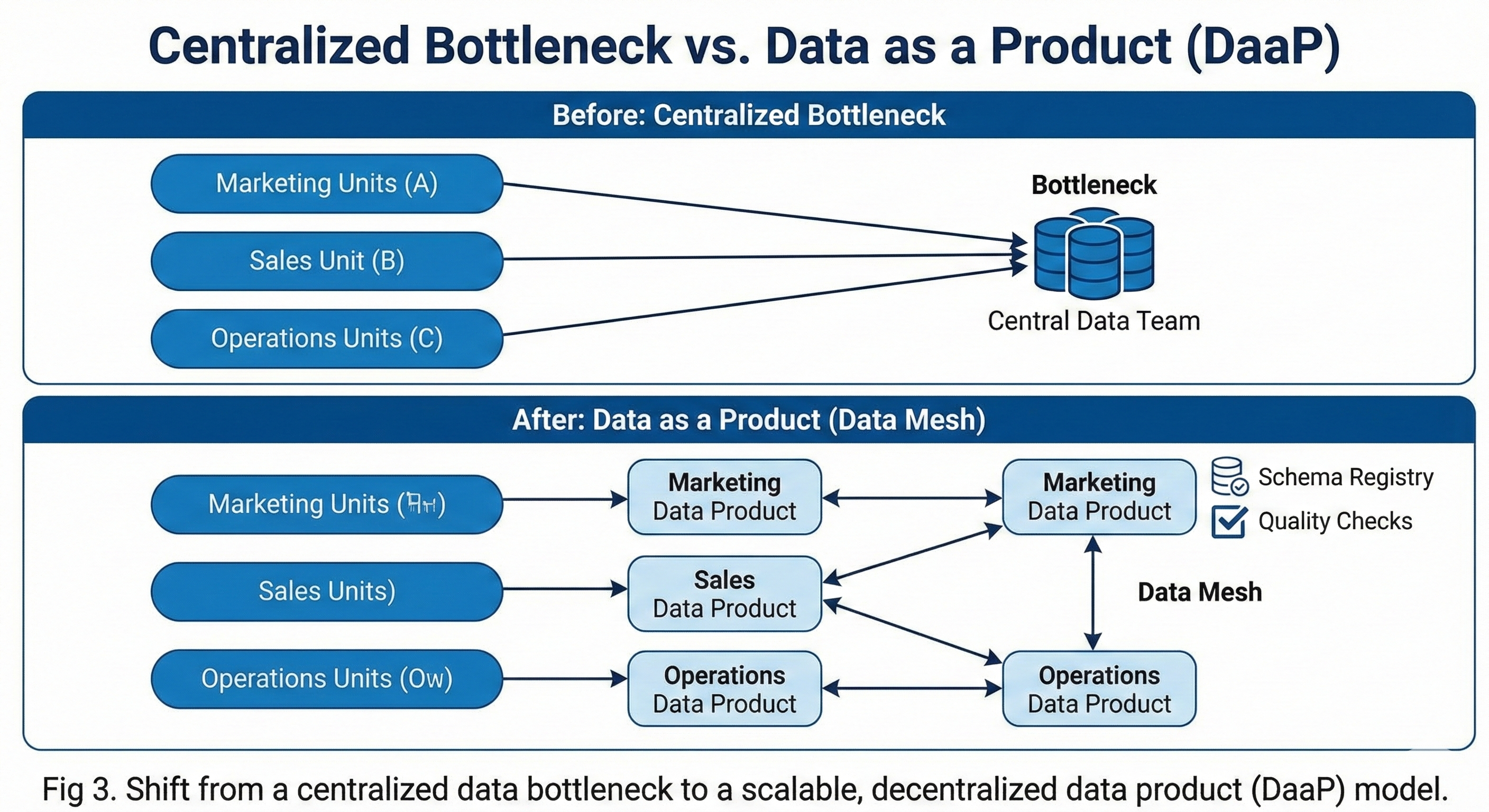

For a SaaS or tech-enabled company to scale, it must solve the "Data Bottleneck." Traditionally, central data teams act as service desks, creating a linear dependency that slows down product velocity and increases overhead. The Data as a Product (DaaP) framework decentralizes this responsibility, treating data with the same rigor as a customer-facing API.

1. Decentralized Ownership and Domain Autonomy

Under this model, individual business units (e.g., Marketing, Logistics, R&D) own their data end-to-end. They are responsible for its quality, its schema, and its uptime. This removes the central IT bottleneck and allows the organization's intelligence capabilities to scale across domains in parallel instead of queuing behind a single team. From a private equity (PE) perspective, this increases the Operating Leverage of the firm; the data infrastructure becomes a force multiplier rather than a headcount driver.

2. Schema Enforcement as a Contractual Guarantee

In a DaaP model, schemas are not suggestions; they are contracts. A versioned schema registry (Kleppmann, 2017) checks each new schema version for compatibility, so that when a producer updates a dataset, the change cannot silently break the downstream AI models or analytics dashboards that rely on it. This "Contract-Driven Development" keeps the data platform a reliable foundation for decision-making, rather than a "data swamp" requiring constant manual intervention.
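
A minimal Python sketch of the contract idea follows. The `SchemaRegistry` class and its compatibility rule (existing fields may not be removed or retyped, new fields may be added) are simplifying assumptions for illustration; real schema registries support richer compatibility rules.

```python
Schema = dict[str, str]  # field name -> type name (hypothetical representation)


class IncompatibleSchemaError(Exception):
    """Raised when a new schema version would break existing consumers."""


def breaking_changes(old: Schema, new: Schema) -> list[str]:
    """List the changes in `new` that would break readers of `old`."""
    problems = []
    for name, type_name in old.items():
        if name not in new:
            problems.append(f"removed field '{name}'")
        elif new[name] != type_name:
            problems.append(
                f"changed type of '{name}' from {type_name} to {new[name]}"
            )
    return problems


class SchemaRegistry:
    """Hypothetical in-memory registry keeping every accepted version."""

    def __init__(self) -> None:
        self._versions: dict[str, list[Schema]] = {}

    def register(self, dataset: str, schema: Schema) -> int:
        """Accept the schema if compatible and return its version number."""
        versions = self._versions.setdefault(dataset, [])
        if versions:
            problems = breaking_changes(versions[-1], schema)
            if problems:
                raise IncompatibleSchemaError("; ".join(problems))
        versions.append(dict(schema))
        return len(versions)


registry = SchemaRegistry()
v1 = registry.register("orders", {"order_id": "string", "amount": "double"})
# Adding a field is allowed under this rule.
v2 = registry.register(
    "orders", {"order_id": "string", "amount": "double", "currency": "string"}
)
# Dropping a field that consumers may read is rejected at publish time.
try:
    registry.register("orders", {"order_id": "string"})
    rejected = ""
except IncompatibleSchemaError as exc:
    rejected = str(exc)
```

The point of the design is where the failure happens: the producer's publish step fails loudly, instead of a downstream dashboard or model failing quietly days later.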

V. Strategic Implications for the Board and Investors

For technology investors, the quality of the data architecture is a leading indicator of a company’s ability to sustain its margins and defend its market share.

| Strategic Metric | Legacy "Data Lake" Model | Unbundled DaaP Platform |
|---|---|---|
| Agility / Time-to-Insight | Weeks (due to ETL & central backlogs) | Minutes (via self-service data products) |
| AI Reliability | Low (susceptible to data drift and skew) | High (via immutable, versioned features) |
| Exit Valuation | Discounted (due to technical debt) | Premium (due to portable, IP-owned assets) |
| Regulatory Risk | High (opaque data flows and silos) | Low (full lineage and time-travel) |

VI. The Executive Bottom Line: Execution over Tools

The hardest part of building an AI-ready platform is not selecting the right vendor—it is driving the organizational alignment required to treat data as a first-class citizen.

The role of the technology executive in 2026 is part architect and part cultural transformation leader. It requires moving the conversation from "Which AI tool should we buy?" to "How do we build a system that can evolve for the next decade?"

The organizations that win this decade will not be those with the most raw data, but those with the most accessible, reliable, and structured intelligence. Building that intelligence begins with an architecture that respects the principles of immutability, unbundling, and semantic clarity. This is the foundation of the modern architectural moat.
